G-SYNC is a variable refresh rate technology. The refresh rate changes while gaming!

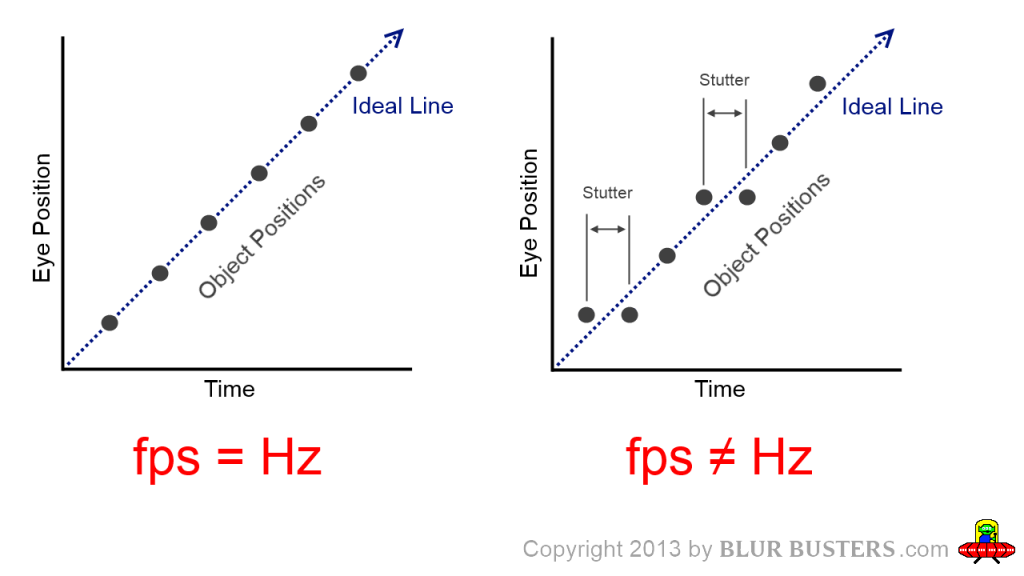

Many people are surprised that a fluctuating framerate can look free of stutter, but it is possible on a variable refresh rate display such as nVidia’s G-SYNC. These diagrams explain, from the human vision perspective, why nVidia G-SYNC eliminates erratic stutters when your eyes track moving objects on a monitor:

Without G-SYNC:

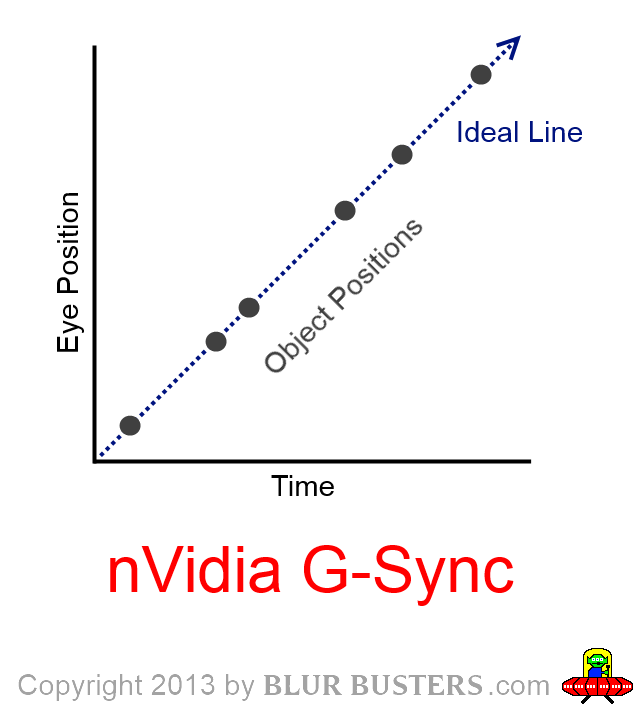

With G-SYNC:

As your eyes track a moving object at a constant speed, each frame now shows the object exactly where your eyes expect it to be. This holds with nVidia G-SYNC at any frame rate fluctuating within G-SYNC’s range (e.g. 30fps to 144fps), so you do not see erratic stutters during variable-framerate motion.
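To make the idea concrete, here is a minimal Python sketch of a simplified model, not a description of how G-SYNC is implemented. It assumes each frame draws the object at the position for the moment the frame finished rendering, that a fixed-refresh display with VSYNC holds the frame until the next refresh, and that a variable refresh display shows it immediately. The function name `position_errors`, the frame times, and the 960 pixels/second speed are all made up for illustration.

```python
import math

def position_errors(frame_times, speed_px_per_s, refresh_hz=None):
    """Gap in pixels between where a tracking eye expects the object
    and where each frame actually drew it.

    frame_times: times (seconds) at which each frame finished rendering.
    refresh_hz: fixed refresh rate, or None to model a variable refresh
                display that shows every frame as soon as it is ready.
    """
    errors = []
    for t in frame_times:
        if refresh_hz is None:
            shown_at = t  # variable refresh: display follows the frame
        else:
            period = 1.0 / refresh_hz
            # fixed refresh: frame waits for the next refresh boundary
            shown_at = math.ceil(t / period) * period
        # the eye kept moving at constant speed while the frame waited
        errors.append(speed_px_per_s * (shown_at - t))
    return errors

# Hypothetical, unevenly spaced frame completion times (seconds).
frame_times = [0.010, 0.031, 0.045, 0.070]
speed = 960  # pixels per second, an assumed panning speed

fixed = position_errors(frame_times, speed, refresh_hz=60)
vrr = position_errors(frame_times, speed)

print("fixed 60Hz errors (px):", [round(e, 2) for e in fixed])
print("variable refresh errors (px):", vrr)
```

In this model the fixed-refresh errors change from frame to frame, which is what the eye would see as erratic stutter, while the variable refresh errors are all zero. A real display adds a small delay to every frame, but a constant delay does not cause stutter.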

There is, however, a very minor side effect: Variable motion blur.

– As framerates go down, your eyes perceive more motion blur.

– As framerates go up, your eyes perceive less motion blur.

This effect is easy to see in the animations at www.testufo.com, where lower framerates look blurrier than higher framerates (on LCD displays).
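As a rough illustration of how blur scales with framerate, the sketch below assumes a sample-and-hold LCD with no strobing, where each frame stays visible for the whole frame time and pixel response time is ignored. Under those assumptions, the blur trail seen by a tracking eye is roughly the distance the object moves during one frame. The function name `blur_px` and the 960 pixels/second speed are chosen for this example only.

```python
def blur_px(speed_px_per_s, fps):
    """Approximate motion blur width in pixels on a sample-and-hold
    display: distance the eye travels while one frame stays on screen."""
    return speed_px_per_s / fps

speed = 960  # pixels per second, an assumed panning speed

for fps in (30, 60, 144):
    print(f"{fps} fps: about {blur_px(speed, fps):.1f} px of blur")
```

With these numbers, halving the framerate doubles the estimated blur, which matches the trend described above: more blur as framerates go down, less as they go up.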

How To Get G-SYNC?

See List of G-SYNC Monitors. Also discuss G-SYNC in the Blur Busters Forums!